The tools we use to build interfaces for the web browser are pretty low level: browsers currently expose no APIs to interpret a user’s gestures, and there isn’t a standard library for mixing in real-life behaviours such as gravity or resistance. Our users will increasingly expect websites to behave like native applications, so if we want to compete we have to implement everything ourselves on the client side in JavaScript.

This can mean we end up with a lot of code, but we still can’t afford to make our sites clunky: it has long been known that users expect feedback within around 100ms.

Stripping back the complexity, a basic draggable element is straightforward to implement. Try moving the red square around in the example below (don’t worry, it works with a mouse too):
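For reference, a minimal sketch of this kind of draggable element is shown here. It is not the code behind the original example: the helper name `makeDraggable` is hypothetical, and it assumes an absolutely positioned element and a browser that supports Pointer Events (which cover both touch and mouse input).

```javascript
// Hypothetical helper: makes `el` draggable by updating its left/top style.
// Assumes `el` is absolutely positioned and Pointer Events are supported.
function makeDraggable(el) {
  let origin = null; // pointer position and element offset at drag start

  function onDown(event) {
    origin = {
      pointerX: event.clientX,
      pointerY: event.clientY,
      left: parseFloat(el.style.left) || 0,
      top: parseFloat(el.style.top) || 0
    };
    // Keep receiving move events even if the pointer leaves the element
    if (el.setPointerCapture) el.setPointerCapture(event.pointerId);
  }

  function onMove(event) {
    if (!origin) return; // ignore moves when no drag is in progress
    el.style.left = origin.left + (event.clientX - origin.pointerX) + "px";
    el.style.top = origin.top + (event.clientY - origin.pointerY) + "px";
  }

  function onUp() {
    origin = null;
  }

  el.addEventListener("pointerdown", onDown);
  el.addEventListener("pointermove", onMove);
  el.addEventListener("pointerup", onUp);
  el.addEventListener("pointercancel", onUp);
}
```

On touch devices the element would probably also need `touch-action: none` in its CSS so the browser does not treat the gesture as a scroll.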

Moving the square around should be fairly smooth; it’s just one element on a blank surface, and it’s much simpler than anything we’d be asked to build. But moving on quickly to a more complex test with gradients, transparency and shadows, the performance can noticeably degrade on lower-powered devices.

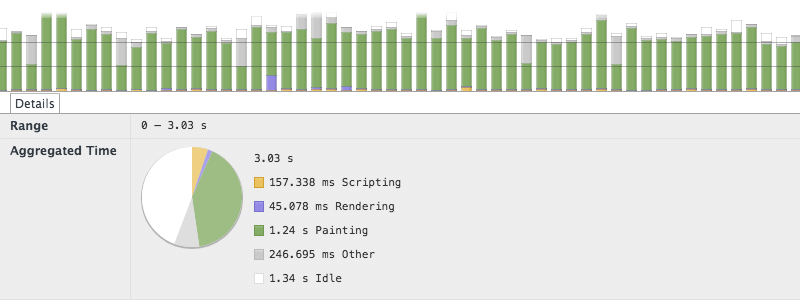

The timeline panel of the web inspector makes the performance problem easy to diagnose: each time the square is moved, the browser repaints the complex layers. If the interaction is going to be smooth, that has to be avoided.

Each time the element is re-positioned it’s being repainted.

Avoiding the repaint requires only a small change to the code: instead of changing the absolute position of the element, it can be moved using the translate3d CSS transform function. Browsers that support 3D transforms will attempt to switch to hardware compositing for the page and promote the element to its own accelerated context. In effect this places the square onto its own layer that can be shifted independently of the background.

The transform function also has a second benefit: it can move the element into sub-pixel positions. This means that instead of snapping the element to the nearest pixel, the browser can interpolate between pixels and maintain a smoother appearance overall.
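As an illustration of how small the change is, here is a hedged sketch of a transform-based drag. The helper names `translateElement` and `makeTransformDraggable` are hypothetical, and Pointer Events are again assumed; the only essential difference from a position-based version is that the move handler writes a `translate3d` transform instead of `left` and `top`.

```javascript
// Hypothetical helper: moves `el` by (x, y) pixels from its laid-out position
// using a 3D transform instead of left/top. Fractional values are allowed.
function translateElement(el, x, y) {
  el.style.transform = "translate3d(" + x + "px, " + y + "px, 0)";
}

// Hypothetical helper: drags `el` by accumulating pointer movement into a
// translation. Assumes Pointer Events are supported.
function makeTransformDraggable(el) {
  let offsetX = 0; // accumulated horizontal translation in pixels
  let offsetY = 0; // accumulated vertical translation in pixels
  let last = null; // last pointer position seen during the current drag

  el.addEventListener("pointerdown", function (event) {
    last = { x: event.clientX, y: event.clientY };
    if (el.setPointerCapture) el.setPointerCapture(event.pointerId);
  });

  el.addEventListener("pointermove", function (event) {
    if (!last) return; // no drag in progress
    offsetX += event.clientX - last.x;
    offsetY += event.clientY - last.y;
    last = { x: event.clientX, y: event.clientY };
    translateElement(el, offsetX, offsetY);
  });

  function endDrag() {
    last = null;
  }

  el.addEventListener("pointerup", endDrag);
  el.addEventListener("pointercancel", endDrag);
}
```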

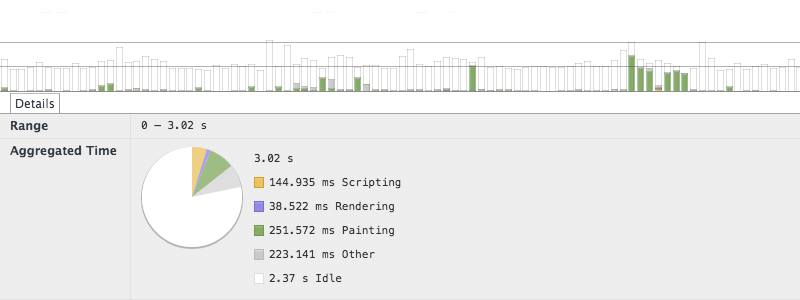

Employing the timeline panel again to review the difference, we can see that the time spent painting has been reduced and the frame rate now remains comfortably at 60 FPS for most of the recorded period:

Using a CSS 3D transform to create an accelerated layer avoids repainting an element each time it is moved.

The example code handling the interaction has so far been very basic; if it’s going to be useful then it needs to infer some meaning from the user’s movements. The following example tracks the current direction and velocity of the gesture and the distance the square has moved from its starting position, and updates that information on screen:

The example above can get quite janky on low-powered devices, and it looks as though the extra work added to each event callback is slowing it down. Investigating with the timeline panel again, however, it’s clear that although each callback is taking longer, they’re not complex enough to make a device suffer.

The jank in this situation is actually being caused by the events not coinciding with the browser’s update rate. This was happening insidiously in the previous examples too, but the additional (relatively expensive) changes to the DOM required to display the calculations are exacerbating it.
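The calculations involved might look something like the sketch below. The function name `describeGesture` and the `{ x, y, time }` sample shape are assumptions made for illustration, not taken from the original example; velocity is expressed in pixels per millisecond.

```javascript
// Hypothetical helper: derives gesture information from the position where
// the drag started and the two most recent pointer samples. Each sample is
// { x, y, time }, with time in milliseconds.
function describeGesture(start, previous, current) {
  const dx = current.x - previous.x;
  const dy = current.y - previous.y;
  const elapsed = current.time - previous.time;

  // Direction of the most recent movement along each axis
  const horizontal = dx > 0 ? "right" : dx < 0 ? "left" : "none";
  const vertical = dy > 0 ? "down" : dy < 0 ? "up" : "none";

  // Speed in pixels per millisecond; zero if no time has passed
  const velocity = elapsed > 0 ? Math.hypot(dx, dy) / elapsed : 0;

  // Straight-line distance from the starting position
  const distance = Math.hypot(current.x - start.x, current.y - start.y);

  return { horizontal, vertical, velocity, distance };
}
```

For example, a movement of 30px to the right and 40px upwards over 100ms covers 50px, which gives a velocity of 0.5 pixels per millisecond.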

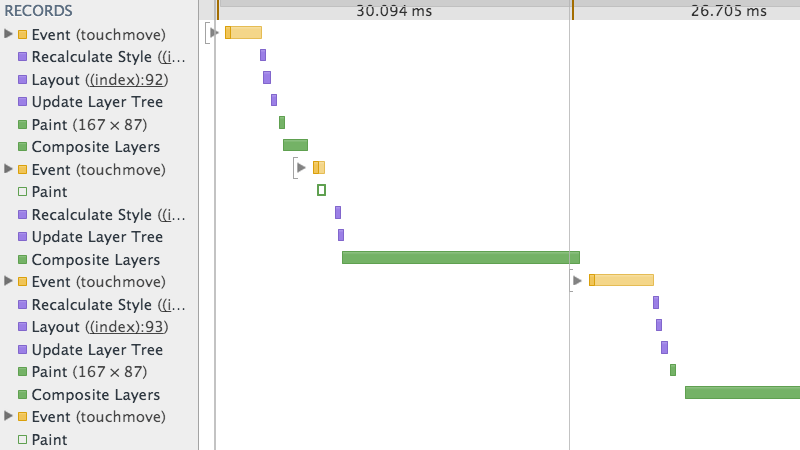

Because the events can be triggered in a continuous stream, the code handling them is likely to bunch too much work into a single frame:

Multiple events can be processed unnecessarily in each frame, causing regular dips below 60 FPS.

The issue can be remedied by using the browser’s animation timing API, which allows a callback to be scheduled to fit the next available frame. This stops the event callbacks dictating to the browser and allows it to decide the best time to make changes.

Switching to take advantage of animation timing is really simple: just store the values generated by each event in a buffer and move any slower calculations and DOM updates into a requestAnimationFrame callback:
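A minimal sketch of that pattern follows. The helper name `createFrameBuffer` is hypothetical, and as a simplification the "buffer" here holds only the most recent value; code that needs every sample (for velocity smoothing, say) could collect them in an array instead. The `schedule` parameter is an assumption added so the sketch can also be exercised outside a browser.

```javascript
// Hypothetical helper: records the latest value from event callbacks and
// passes it to `render` at most once per frame. `schedule` defaults to
// requestAnimationFrame and is a parameter only so it can be swapped out.
function createFrameBuffer(render, schedule = (cb) => requestAnimationFrame(cb)) {
  let latest = null;     // most recent value pushed by an event callback
  let scheduled = false; // whether a frame callback is already pending

  function flush() {
    scheduled = false;
    render(latest); // slower calculations and DOM updates happen here
  }

  return function push(value) {
    latest = value; // cheap: the event callback only records the value
    if (!scheduled) {
      scheduled = true;
      schedule(flush);
    }
  };
}

// Example wiring in a browser (`element` and `updateDisplay` are placeholders):
// const push = createFrameBuffer((point) => updateDisplay(point));
// element.addEventListener("pointermove", (event) => {
//   push({ x: event.clientX, y: event.clientY });
// });
```

However many events arrive between two frames, only one frame callback is requested and only the latest value is rendered.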

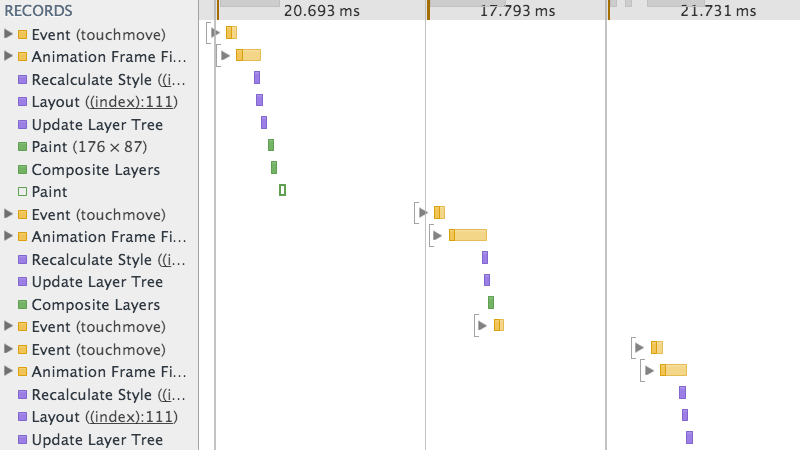

This pattern avoids doing unnecessary or untimely work and allows the browser to optimise its workload for each frame. The technique does add a little complication by making DOM changes asynchronous: if related code relies on knowing the state of the DOM or when changes are made, then more code will be required to handle that. But, as the timeline below shows, it’s totally worth the extra effort:

The animation timing API can distribute work evenly between frames, leading to less work and smoother performance.

TL;DR

- Translate elements on their own compositing layer when necessary

- Ensure event callbacks are as lean as possible

- Buffer calculations and changes to the DOM with requestAnimationFrame